Gone are the days when data could be stored neatly in static databases and queried at leisure. The digital era has brought an unprecedented surge in data generation from many sources, including IoT devices, social media interactions, and customer behavior.

As companies deploy AI applications to optimize existing processes and open new lines of business, the velocity and variety of data have escalated. This is forcing organizations to adopt a modern data architecture, in which data pipelines stream, move, and transform data to feed cloud data lakehouses.

Data scientists and analysts rely on data engineers to deliver a steady flow of trustworthy data to feed their models.

However, data engineering teams typically use products that monitor data at rest. As a result, errors are not detected until the data has landed, been transformed, and been made available for analysis or added to a data set used to train an AI model. Once an error is discovered, undoing the damage can take weeks or months. Worse, it may never be found.

Data engineering teams try to keep up by adopting multiple open-source tools and frameworks to monitor data pipelines, runs, and tasks. But more tools are not always better: the alerts configured across all of these applications create a flood of noise, making it difficult to tell which alerts require immediate attention.

A modern data architecture needs a modern approach to eliminate such issues. That approach is Continuous Data Observability.

Products like Databand support Continuous Data Observability and integrate with the technologies found in a modern data architecture, such as Spark, Airflow, dbt, Python, and Snowflake.

The platform starts by collecting metadata from those technologies to build a historical baseline of what normal pipeline behavior looks like. Using its own ML models, it can then determine in real time whether a pipeline is deviating from that baseline and alert you to anomalies such as unusual processing duration, schema changes, or changes in the handling of null values.
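The baseline-and-deviation idea can be sketched in a few lines of Python. This is a minimal illustration that assumes a simple z-score test over past runs; it is not Databand's ML models or API. The function names `is_anomalous`, `schema_diff`, and `null_rate`, the `sensitivity` threshold (a stand-in for tunable alert sensitivity), and the sample durations are all hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List, Optional, Sequence

def is_anomalous(history: Sequence[float], latest: float, sensitivity: float = 3.0) -> bool:
    """Z-score test: flag `latest` if it lies more than `sensitivity`
    standard deviations from the mean of `history` (the baseline)."""
    if len(history) < 2:
        return False  # too little history to form a baseline yet
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # perfectly stable baseline: any change is unusual
    return abs(latest - mu) / sigma > sensitivity

def schema_diff(baseline: Sequence[str], current: Sequence[str]) -> Dict[str, List[str]]:
    """Columns added to or removed from a dataset relative to its baseline schema."""
    base, cur = set(baseline), set(current)
    return {"added": sorted(cur - base), "removed": sorted(base - cur)}

def null_rate(values: Sequence[Optional[object]]) -> float:
    """Fraction of values that are None; can be tracked per run and fed to is_anomalous."""
    return sum(v is None for v in values) / len(values) if values else 0.0

# Hypothetical history of pipeline run durations, in seconds.
durations = [120, 118, 125, 122, 119, 121]
print(is_anomalous(durations, 123))  # False: within the normal band
print(is_anomalous(durations, 300))  # True: far outside the baseline
print(schema_diff(["id", "amount"], ["id", "amount", "region"]))  # {'added': ['region'], 'removed': []}
```

Raising `sensitivity` produces fewer alerts and lowering it produces more; a production system would learn richer baselines (seasonality, trends) rather than a single mean and standard deviation.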

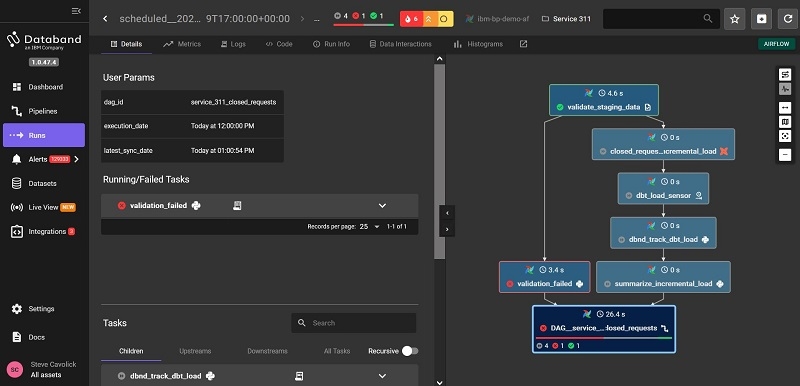

The image above is a screenshot of the Databand console showing a data pipeline run with errors and alerts.

You can adjust the sensitivity of anomaly reporting if you feel you are receiving too many or too few alerts, helping you focus on the issues most critical to keeping your pipelines running error-free.

In addition, you can set alerts based on custom rules or validations specific to your business. For example, if you know that the values of a particular column in a dataset should fall between 5 and 10, you can configure that rule in the system and be alerted whenever data falls outside that range.
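A custom range rule like the 5-to-10 example can be expressed as a small validation check. The sketch below is a hypothetical, platform-agnostic illustration: the `RangeRule` class and its `violations` method are not part of any product API. It assumes inclusive bounds and treats missing values as violations. Both are design choices you might make differently.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

@dataclass
class RangeRule:
    """Hypothetical custom rule: values in `column` must lie in [minimum, maximum]."""
    column: str
    minimum: float
    maximum: float

    def violations(self, rows: Iterable[dict]) -> List[Tuple[int, object]]:
        """Return (row_index, value) pairs that break the rule.
        Missing or None values are treated as violations (an assumption)."""
        bad = []
        for i, row in enumerate(rows):
            value = row.get(self.column)
            if value is None or not (self.minimum <= value <= self.maximum):
                bad.append((i, value))
        return bad

rule = RangeRule(column="score", minimum=5, maximum=10)
rows = [{"score": 7}, {"score": 12}, {"score": 5}, {"other": 1}]
for index, value in rule.violations(rows):
    # In a real system this would raise an alert instead of printing.
    print(f"ALERT: row {index} has {rule.column}={value}, expected {rule.minimum}-{rule.maximum}")
```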

Finally, the platform helps reduce Mean Time To Resolution (MTTR) with smart workflows that remediate some of the alerted issues and help you maintain your data SLAs.

Continuous Data Observability provides the following business value:

- Detect Earlier: Pinpoint unknown data incidents and reduce Mean Time To Detection (MTTD) from days to minutes.

- Resolve Faster: Reduce MTTR from weeks to hours with incident alerts and intelligent routing.

- Deliver Trustworthy Data: Enhance reliability and data delivery SLAs, and provide visibility into pipeline quality issues that would otherwise be undetected.
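MTTD and MTTR are simple averages over incident timestamps. The sketch below assumes each incident records when bad data occurred, when it was detected, and when it was resolved, and it measures MTTR from detection to resolution (some teams measure it from occurrence instead). The `Incident` type and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, NamedTuple

class Incident(NamedTuple):
    occurred: datetime  # when the bad data entered the pipeline
    detected: datetime  # when an alert fired
    resolved: datetime  # when the issue was fixed

def _average(deltas: List[timedelta]) -> timedelta:
    return sum(deltas, timedelta()) / len(deltas)

def mttd(incidents: List[Incident]) -> timedelta:
    """Mean Time To Detection: average of (detected - occurred)."""
    return _average([i.detected - i.occurred for i in incidents])

def mttr(incidents: List[Incident]) -> timedelta:
    """Mean Time To Resolution, measured here from detection to resolution."""
    return _average([i.resolved - i.detected for i in incidents])

incidents = [
    Incident(datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 8, 20), datetime(2024, 1, 1, 11, 20)),
    Incident(datetime(2024, 1, 2, 9, 0), datetime(2024, 1, 2, 9, 40), datetime(2024, 1, 2, 14, 40)),
]
print(mttd(incidents))  # 0:30:00
print(mttr(incidents))  # 4:00:00
```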

Just as every airline pilot needs a reliable navigator, every data-driven organization needs real-time insights to catch data issues before they cause costly damage to its business, reputation, and AI aspirations. Please contact us if you are interested in learning more about how LRS can help you achieve Continuous Data Observability.

About the Author

Steve Cavolick is a Senior Solution Architect with LRS IT Solutions. With over 20 years of experience in enterprise business analytics and information management, Steve is 100% focused on helping customers find value in their data to drive better business outcomes. Using technologies from best-of-breed vendors, he has created solutions for the retail, telco, manufacturing, distribution, financial services, gaming, and insurance industries.